Connecting To Snowflake- Table of Content

- Connecting to snowflake

- Loading Data into Snowflake

- Unloading data into Snowflake

- Steps required to connect data source into the snowflake

- Final words

Snowflake

Snowflake data cloud is a powerful data warehouse management platform. It is not an addition to already existing data warehouse management platforms. Snowflake data cloud is built on top of various cloud services such as Amazon web services, Microsoft Azure, and GCP (Google cloud platform) infrastructure.

Become a Snowflake Certified professional by learning this HKR Snowflake Training !

Connecting to snowflake

This section explains the third-party tools and technologies that form the extended snowflake ecosystem into connecting to snowflakes.

Here we are going to provide you with detailed information about installation or configurations, and snowflake client providers such as CLI, connectors, and drivers from the Snowflake ecosystem.

Snowflake Ecosystem

Under the snowflake ecosystem, we have listed a few primary 3rd party tools and technologies. They are;

- All partners and technologies (Alphabetical orders).

- Integration of the data.

- Business intelligence (BI).

- ML (machine learning) and data science

- Security and governance

- SQL development and management

- Native programmatic interfaces.

Snowflake partner connects

Here we are going to make a list of free trails to connect with Snowflakes that consist of the info about starting a free trial through the snowflake editions.

General configuration settings (that includes all clients)

This section provides detailed information about snowflake provided clients (CLI, connectors, and drivers).

The list of connectors and drivers are as follows;

- Viewing snowflake client versions.

- Snow CD (Connectivity diagnostic tools)

- Allowing Hostnames

- Limits on the Query text size

- OCSP configuration

- Snowflake client repository.

- SQL statements support preparations.

SnowSQL (CLI client) support

This includes instructions for installing, configuring, and using the SQL command-line interface clients.

The information is as follows;

- Installing SnowSQL

- Configuring the SnowSQL

- Connecting through SnowSQL

- Using the snow SQL.

Connectors and drivers

Here you will get complete detail on installation, configuration, and using the snowflake connectors or drivers for Python, Spark, ODBC, JDBC, and other connectors.

- Snowflake connectors for Python

- Snowflake connectors for Spark

- Snowflake connectors for Kafka

- Node.JS drivers.

- Go snowflake drivers

- .Net drivers

- JDBC drivers

- ODBC connectors

- PHP PDO drivers for snowflakes.

Snowflake Training

- Master Your Craft

- Lifetime LMS & Faculty Access

- 24/7 online expert support

- Real-world & Project Based Learning

Loading Data into Snowflake

This topic describes the concepts and tasking for loading the data into the snowflake database clients.

Key concepts related to data loading as well as best practices:

- Overview of the data loading.

- Summary of data loading features

- Data Loading considerations

Overview of supported data file formats and data compressions.

- Preparing to load data.

Detailed instructions for loading data in bulk using the COPY command.

- BULK loading of the data using COPY

Detailed instructions for loading data continuously using snow pipes.

- Loading data continuously using snow pipes.

Basic instruction for loading an unlimited amount of data using the web interface.

- Loading the data using the web interface (Limited amount of data).

Key concepts and tasks for executing the queries on staged data and transforming data while loading it into the tables.

- Querying data into the staged files.

- Querying the metadata for staged files.

- Transforming the data during a load.

Supported file locations

- External stages

- Internal stages

Let’s discuss each file storage location in brief;

External stages

The following are the external data storage locations that support file storage. The below are the primarily used external storage devices;

a.Amazon S3

b.Google cloud storage

c. Microsoft Azure

Users are able to upload the data into external storage devices with the help of cloud storage services.

A named external stage in a database that creates the object known as “Schema”. This type of object stores the data into files in cloud storage areas. To create the stages using the CREATE STAGE command -> access the cloud storage -> that formats the described files.

Become a Snowflake Certified professional by learning this HKR Snowflake Training in Pune !

Internal stage

Snowflakes maintain the following internal stages in your account;

- USER : a user stage is allocated for an individual user to store the file. This type of internal stage is specially designed and managed by a single user but the files can be loaded into multiple tables. Most importantly, a user stage cannot be dropped or altered/modified.

- Table : A table is also a type of internal stage that is created to store each table in the snowflake. This type of table stage is specially designed and managed by a single or multiple users, but data is loaded into a single table.

- Named : a named internal stage is a type of database object created in the schema. This type of internal stage is designed and maintained by one or more users and loaded into one or more tables. The named stage is an object type, so users are able to create/ alter/ drop or delete the objects as per their requirements.

You can create the named stage using the “CREATE STAGE” command.

Top 30 frequently asked Snowflake interview questions & answers for freshers & experienced professionals

Subscribe to our YouTube channel to get new updates..!

Unloading data into Snowflake

Below are the important factors that we are going to cover in this section. They are;

Key concepts related to unloading the data as well as best practices

- Overview of the data unloading

- Summary of the data unloading features.

- Data unloading considerations.

Overview of the supported data file formats for unloading data:

- Preparing to unload data.

Detailed instructions for unloading data in bulk using the COPY command

- Unloading data into a snowflake stage.

- Unloading data into Amazon S3.

- Unloading data into Google cloud storage.

- Unloading data into Microsoft Azure.

Get ahead in your career with our Snowflake Tutorial !

Steps required to connect data source into the snowflake

Step1: Before you login, recheck with the below factors

- Make sure that snowflakedriver.config is pointing towards a valid snowflake jdbc driver. (this step is mandatory if you have downloaded the new version of the JDBC driver).

- Then define a job that consists of the snowflake connector edge.

Step2: important procedures to consider

- Go to the job design canvas -> then double click the snowflake connector icon -> then open the stage editor.

- Then the properties window page will pop up -> now specify the value for the properties. While choosing the URL type, choose the JDBC URL string for the Snowflake database.

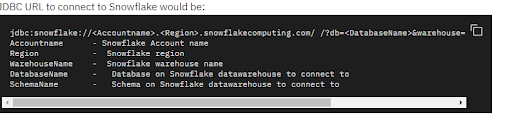

JDBC URL connect to snowflake as shown below;

- Now select yes for the “use key-pair authentication” property which is used to connect using the “key-pair authentication” mechanism.

- Provide the path where the private key file that exists on the engine tier of the private key file property ->provide the corresponding password. If the file is not encrypted , then the private key password should be kept empty.

- In the username and password property -> specify the credentials for the user authentication -> then authorize for the connection.

- Set the property from the “use connection URL” to NO, which enables the Account name, warehouse, Region, Database, and shema connect to the snowflake warehouse. Although sometimes we don't require warehouse property to establish the connection, this type of connection is required only when the subsequent operation is performed by the connector.

- Go to the “Attribute property” then specify the additional driver-specific connection properties. For example: suppose if you want the table to be created with a default value in; “Timestamp” format for the attribute Timestamp LTZ, it should contain the value TIMSESTAMP_TYPE_MAPPING = TIMESTAMP_LTZ. If the multiple properties need to be defined then each driver connection specification should have the separate syntax property_name = property_value format.

- Click the “OK” button to save the connection in snowflake.

Final words

The connection to the snowflake article is the best example of a user guide kind of manual that assists the developers in how to install the snowflake connection. This article helps developers to configure or install the snowflake connection software. Snowflake is a popular cloud data warehouse management software, so it integrates with other cloud platforms such as Amazon S3, Google Cloud, and Microsoft Azure. With the help of this article, you will be able to learn the snowflake connection with database servers, loading and unloading the data into a snowflake. For more updates, please stay tuned to our website.

Related Articles:

About Author

As a senior Technical Content Writer for HKR Trainings, Gayathri has a good comprehension of the present technical innovations, which incorporates perspectives like Business Intelligence and Analytics. She conveys advanced technical ideas precisely and vividly, as conceivable to the target group, guaranteeing that the content is available to clients. She writes qualitative content in the field of Data Warehousing & ETL, Big Data Analytics, and ERP Tools. Connect me on LinkedIn.

Upcoming Snowflake Training Online classes

| Batch starts on 27th May 2026 |

|

||

| Batch starts on 31st May 2026 |

|

||

| Batch starts on 4th Jun 2026 |

|